GitHub Repository

Pull request merging the Docker container code into main: https://github.com/Lambda-School-Labs/scribble-stadium-ds/pull/75

Pull request merging the data pipeline that generates Tesseract’s training dataset into main: https://github.com/Lambda-School-Labs/scribble-stadium-ds/pull/60

What is Story Squad?

Story Squad is a startup founded by Graig Peterson, a former teacher, to create games and competitions that keep today’s children off-screen. Children compete with each other by writing stories and drawing parts of them. Here’s how it works: child users of the website receive a new chapter in an ongoing story each weekend. They read the story and then follow both writing and drawing prompts to spend an hour off-screen writing and drawing. When they’re done, they upload photos of each, and this is where our data science team comes in. The stories are transcribed into text, analyzed for complexity, screened for inappropriate content, and then sent to a moderator. The transcription is done with Tesseract OCR, which is free to use, and Story Squad has generated its own data to train a custom model. Once all submissions have been checked on moderation day, our clustering algorithm groups the submissions by similar complexity and creates Squads of 4, who head into a game of assigning points and voting for the best submissions in head-to-head pairings within the cluster! Then it starts all over again the following weekend.

Infrastructure as Code (IaC)

Story Squad was using free-tier Amazon Elastic Compute Cloud (Amazon EC2) virtual machines to train a custom Tesseract OCR model. Each person needed their own machine so that each team member’s hyperparameter-tuning work was preserved. The issue with EC2 was that our Data Science manager had to clone the EC2 image every time a new person joined the Machine Learning team. Waiting for new image credentials from the Data Science manager was time-consuming, and the new member then still had to pull all the data from the GitHub repo to train a new model.

One of our team members also ran into the memory limit of the free-tier EC2 instance, which has 1 GB of RAM. She was the one who proposed setting up a Docker container. I took the initiative to create a Docker container that pulls an Ubuntu 18.04 image and builds Tesseract OCR on it. With this solution, any incoming Machine Learning engineer can build their own local environment within minutes.

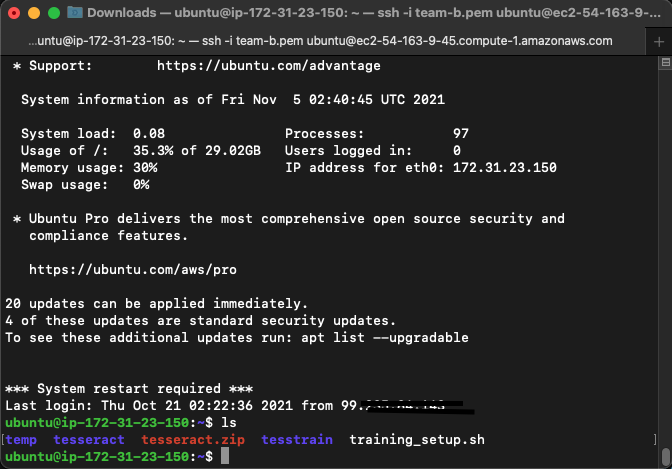

EC2 instance landing page after ssh login Screenshot

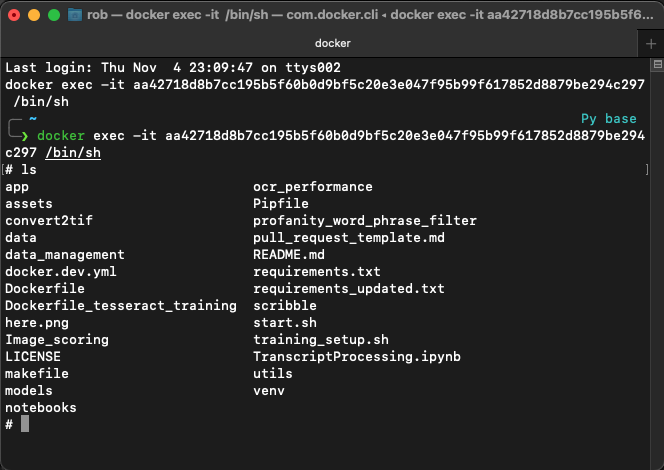

Docker Container CLI Screenshot

Finding the data was itself a big hurdle in this project. The data had to provide a learning opportunity while also being suitable for applying Machine Learning practices. It needed enough observations to be worth the time: at least 100,000, so that there would be enough to train, validate and then test. The data also had to relate to something that could tie into a business case.

After much investigation, predicting rainfall was chosen as the topic. Precipitation was the closest available measure to this topic of interest.

NASA maintains an app called POWER Single Point Data Access 1 that provides data in JavaScript Object Notation (JSON) format through an Application Programming Interface (API) to users based on the geographical location of interest. NASA’s POWER app requires latitude and longitude to provide the weather information. The latitude and longitude of various countries were gathered using an existing CSV file from GitHub user albertyw 2.

A program written in a Python notebook takes the latitude and longitude of each entry in the country list and passes them to NASA’s app to fetch the following information:-

- Latitude (degrees in decimal)

- Longitude (degrees in decimal)

- Elevation (m)

- PRECTOT - Precipitation (mm day-1)

- QV2M - Specific Humidity at 2 Meters (g/kg)

- PS - Surface Pressure (kPa)

- TS - Earth Skin Temperature (C)

- T2MDEW - Dew/Frost Point at 2 Meters (C)

- T2M - Temperature at 2 Meters (C)

- WS50M - Wind Speed at 50 Meters (m/s)

- WS10M - Wind Speed at 10 Meters (m/s)

- T2MWET - Wet Bulb Temperature at 2 Meters (C)

- T2M_RANGE - Temperature Range at 2 Meters (C)

- RH2M - Relative Humidity at 2 Meters (%)

- KT - Insolation Clearness Index (dimensionless)

- CLRSKY_SFC_SW_DWN - Clear Sky Insolation Incident on a Horizontal Surface (kW-hr/m^2/day)

- ALLSKY_SFC_SW_DWN - All Sky Insolation Incident on a Horizontal Surface (kW-hr/m^2/day)

- ALLSKY_SFC_LW_DWN - Downward Thermal Infrared (Longwave) Radiative Flux (kW-hr/m^2/day)
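
The fetch-and-flatten step described above can be sketched roughly as follows. This is a minimal sketch and not the notebook's actual code: the endpoint URL, the query parameter names, and the JSON layout (`geometry.coordinates` plus `properties.parameter.<NAME>.<YYYYMMDD>`) are assumptions modelled on the public POWER daily point API and should be checked against its current documentation. `build_power_url` and `flatten_power_payload` are hypothetical helper names, and the sample payload is hand-written.

```python
from urllib.parse import urlencode

# Assumed endpoint; verify against the current NASA POWER documentation.
POWER_DAILY_URL = "https://power.larc.nasa.gov/api/temporal/daily/point"


def build_power_url(lat, lon, start, end, parameters):
    """Build a request URL for one location (query names are assumptions)."""
    query = {
        "parameters": ",".join(parameters),
        "community": "AG",
        "latitude": lat,
        "longitude": lon,
        "start": start,  # YYYYMMDD
        "end": end,      # YYYYMMDD
        "format": "JSON",
    }
    return f"{POWER_DAILY_URL}?{urlencode(query)}"


def flatten_power_payload(payload, country_code):
    """Turn one response into a list of per-day rows (one dict per date)."""
    lon, lat, elevation = payload["geometry"]["coordinates"]
    series = payload["properties"]["parameter"]
    dates = sorted(next(iter(series.values())))
    rows = []
    for day in dates:
        row = {"country_code": country_code, "date": day,
               "lat": lat, "long": lon, "elevation": elevation}
        for name, values in series.items():
            row[name] = values[day]
        rows.append(row)
    return rows


# Hand-written sample in the assumed layout; the numbers are made up.
sample_payload = {
    "geometry": {"type": "Point", "coordinates": [77.0, 20.0, 300.0]},
    "properties": {"parameter": {
        "PRECTOT": {"20200101": 0.0, "20200102": 1.5},
        "T2M": {"20200101": 21.3, "20200102": 20.8},
    }},
}
rows = flatten_power_payload(sample_payload, "IN")
```

A list of row dictionaries like this can be passed straight to `pandas.DataFrame(rows)`, and the rows from all countries can be concatenated into one data frame.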

The Python code also merges the country code, latitude and longitude data to make a single data frame for use. The data included weather data for each day for 240 countries from 1980 to 2020. The resulting dataset had 3,506,400 rows × 22 columns.
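
The row count can be sanity-checked with a little date arithmetic. The sketch below assumes that the range runs from 1 January 1980 through 31 December 2019, which is the reading of "1980 to 2020" that reproduces the reported number of rows.

```python
from datetime import date

n_countries = 240
# Assumption: daily data from 1 January 1980 through 31 December 2019.
n_days = (date(2020, 1, 1) - date(1980, 1, 1)).days
n_rows = n_countries * n_days
print(n_days, n_rows)  # 14610 3506400
```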

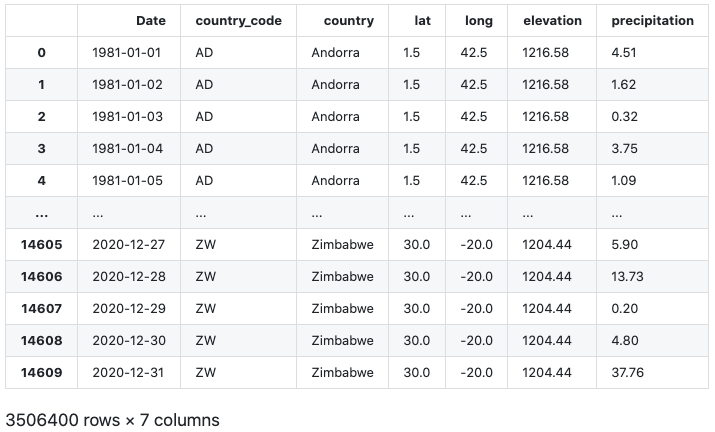

NASA-POWER-DataFrame

The resulting data frame was used in the rest of the research. Learning to use publicly available JSON data, that is, to send requests, receive and interpret the JSON responses, and convert them to a pandas DataFrame, was a small achievement in this machine learning project.

EDA and Machine Learning Model

Data from NASA’s application was cleaned, and unnecessary or repetitive columns were dropped. Before the features and target were selected, one more feature that should affect the Precipitation of an area of interest was added. Based on the hypothesis that Precipitation is higher in countries with more forest area, Forest Area data from the World Bank 3 was merged into the data frame as a feature using the pandas merge function.

Due to resource limitations and quick turnarounds in model training, only the last twenty years of data were considered.

The data was ready for some Exploratory Data Analysis (EDA). Pandas Profiling was used to generate a report to see the type of data, missing values and data distribution.

The features were selected to be the following:-

- country_code

- lat

- long

- elevation

- surface_pressure

- skin_temperature

- dew_frost

- temperature2m

- windspeed10m

- windspeed50m

- wet_bulb_temp

- temp_range

- clearness_index

- clear_sky_insolation

- all_sky_insolation

- radiative_flux

- Forest_Cover(sq km)

The definition of all the features mentioned above was provided in the text above. Precipitation was chosen as the target.

The target was skewed to the right, owing to some 300 observations with unusually high values.

Baseline

The mean Precipitation was chosen as the baseline against which to compare model performance. The mean, calculated over the entire data, was 2.787 mm. The Mean Absolute Error (MAE) of always predicting this mean was 3.379 mm. This baseline MAE is used to see how each model fares in predicting precipitation.
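
As a minimal sketch of how such a mean baseline is computed (the values below are made up for illustration and are not the project's data, and `mean_baseline_mae` is a hypothetical helper name):

```python
from statistics import mean


def mean_baseline_mae(y_true):
    """Return the mean of y_true and the MAE of always predicting it."""
    baseline = mean(y_true)
    errors = [abs(y - baseline) for y in y_true]
    return baseline, mean(errors)


# Made-up precipitation values (mm), only to show the calculation.
precipitation = [0.0, 0.0, 1.0, 2.0, 12.0]
baseline, baseline_mae = mean_baseline_mae(precipitation)
print(baseline, baseline_mae)
```

Any model worth keeping should reach an MAE below this figure on the same data.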

Models

Because the model pipelines take considerable time and resources to fit, the data frame covering 2001 - 2020 was split as follows:-

- Train - Data from 2008 - 2012

- Validate - Data from 2013 only

- Test - Data from 2014
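
A time-based split like the one above can be sketched in plain Python as follows. The `year` key and the `split_by_year` helper are hypothetical, and the rows are made up; with pandas, a boolean mask on a year column would do the same job.

```python
def split_by_year(rows, train_years, val_years, test_years):
    """Partition rows (dicts with a 'year' key) into train/val/test lists."""
    train = [r for r in rows if r["year"] in train_years]
    val = [r for r in rows if r["year"] in val_years]
    test = [r for r in rows if r["year"] in test_years]
    return train, val, test


# Made-up rows, one per year, only to exercise the split.
rows = [{"year": y, "precipitation": 0.1 * (y - 2000)} for y in range(2001, 2021)]
train, val, test = split_by_year(
    rows,
    train_years=range(2008, 2013),  # 2008 - 2012 inclusive
    val_years=[2013],
    test_years=[2014],
)
```

Splitting by year rather than at random keeps the validation and test periods strictly later than the training period.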

The various models run and their findings are discussed below:-

1. Ordinal Encoder and RandomForestRegressor pipeline

The code to instantiate and fit the pipeline was as simple as:-

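```python
import category_encoders as ce
from sklearn.ensemble import RandomForestRegressor
from sklearn.pipeline import make_pipeline

# Encode categorical columns as integers, then fit a 100-tree forest
pipeline_randomforest_OE = make_pipeline(
    ce.OrdinalEncoder(),
    RandomForestRegressor(n_estimators=100, random_state=42, verbose=1, n_jobs=-1)
)
pipeline_randomforest_OE.fit(X_train, y_train)
```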

Since the data was very clean, with no missing values, no imputation or scaling was used.

Parameters to benchmark the model:-

| Parameter | Value |
|---|---|
| Time to fit the model | 22 sec |
| Training Score (R2) | 93.70 % |
| Validation Score (R2) | 44.02 % |
| Test Score (R2) | 44.22 % |
| Baseline MAE | 3.379 mm |
| Model MAE | 2.198 mm |
| Improvement over Baseline MAE | 53.73 % |
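
An improvement percentage can be computed in more than one way. As a hedged check on the numbers in the table above (an observation from the arithmetic, not something the write-up states), dividing the MAE reduction by the model MAE reproduces the reported 53.73 %, while dividing it by the baseline MAE gives a smaller figure.

```python
baseline_mae = 3.379  # mm, from the table above
model_mae = 2.198     # mm, from the table above

reduction = baseline_mae - model_mae
relative_to_model = 100 * reduction / model_mae        # about 53.73
relative_to_baseline = 100 * reduction / baseline_mae  # about 34.95
print(f"{relative_to_model:.2f} % vs {relative_to_baseline:.2f} %")
```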

2. OneHotEncoder and RandomForestRegressor pipeline

The code to instantiate and fit the pipeline was:-

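```python
import category_encoders as ce
from sklearn.ensemble import RandomForestRegressor
from sklearn.pipeline import make_pipeline

# One indicator column per category, then fit a 100-tree forest
pipeline_randomforest_OHE = make_pipeline(
    ce.OneHotEncoder(use_cat_names=True),
    RandomForestRegressor(n_estimators=100, random_state=42, verbose=1, n_jobs=-1)
)
pipeline_randomforest_OHE.fit(X_train, y_train)
```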

Parameters to benchmark the model:-

| Parameter | Value |
|---|---|
| Time to fit the model | 222 sec |
| Training Score (R2) | 93.70 % |
| Validation Score (R2) | 47.76 % |
| Test Score (R2) | 47.30 % |
| Baseline MAE | 3.379 mm |
| Model MAE | 1.965 mm |
| Improvement over Baseline MAE | 71.89 % |
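
The two encoders differ in the width of the feature matrix they produce, which is one plausible contributor to the longer fit time in the table above (an assumption; the write-up does not give a reason). The sketch below is a pure-Python illustration of both encodings with hypothetical helper names, not the `category_encoders` implementation.

```python
def ordinal_encode(values):
    """Map each category to an integer code in order of first appearance."""
    codes = {}
    for v in values:
        codes.setdefault(v, len(codes) + 1)
    return [codes[v] for v in values]


def one_hot_encode(values):
    """Map each category to its own 0/1 indicator column."""
    categories = list(dict.fromkeys(values))  # unique, order preserved
    return [[1 if v == c else 0 for c in categories] for v in values]


country_codes = ["IN", "US", "BR", "US"]
ordinal = ordinal_encode(country_codes)  # a single column of integers
one_hot = one_hot_encode(country_codes)  # one column per distinct code
print(ordinal)  # [1, 2, 3, 2]
print(one_hot)  # [[1, 0, 0], [0, 1, 0], [0, 0, 1], [0, 1, 0]]
```

With 240 distinct country codes, the ordinal encoding keeps `country_code` as one column, while the one-hot encoding expands it into 240.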

3. OrdinalEncoder and XGBoost pipeline

Before the XGBoost pipeline was instantiated and fit, the train, validation and test datasets were updated as follows:-

- Train - Data from 2001 - 2012

- Validate - Data from 2013 - 2016

- Test - Data from 2017 - 2020

This was done because XGBoost fits the model much faster than RandomForestRegressor.

The rest was similar to the earlier pipelines. The code to instantiate and fit the pipeline was:-

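```python
import category_encoders as ce
from sklearn.pipeline import make_pipeline
from xgboost import XGBRegressor

# XGBRegressor takes `verbosity` rather than scikit-learn's `verbose`
pipeline_xgboost = make_pipeline(
    ce.OrdinalEncoder(),
    XGBRegressor(n_estimators=100, random_state=42, verbosity=1, n_jobs=-1)
)
pipeline_xgboost.fit(X_train, y_train)
```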

Parameters to benchmark the model:-

| Parameter | Value |
|---|---|
| Time to fit the model | 11.47 sec |
| Training Score (R2) | 59.00 % |
| Validation Score (R2) | 48.45 % |
| Test Score (R2) | 35.98 % |
| Baseline MAE | 3.379 mm |
| Model MAE | 2.022 mm |
| Improvement over Baseline MAE | 67.09 % |

Analysis

Some gap between training and validation performance was expected, because RandomForestRegressor grows its trees with no depth limit by default. The RandomForestRegressor scores reflect this: the training R2 of 93.70 % is far above the validation R2 of 44.02 % and 47.76 %, which points to overfitting. Another thing to note is that the XGBoost model used data covering a longer period than the earlier models. This may be one reason why its improvement over the baseline is lower than that of the previous model.

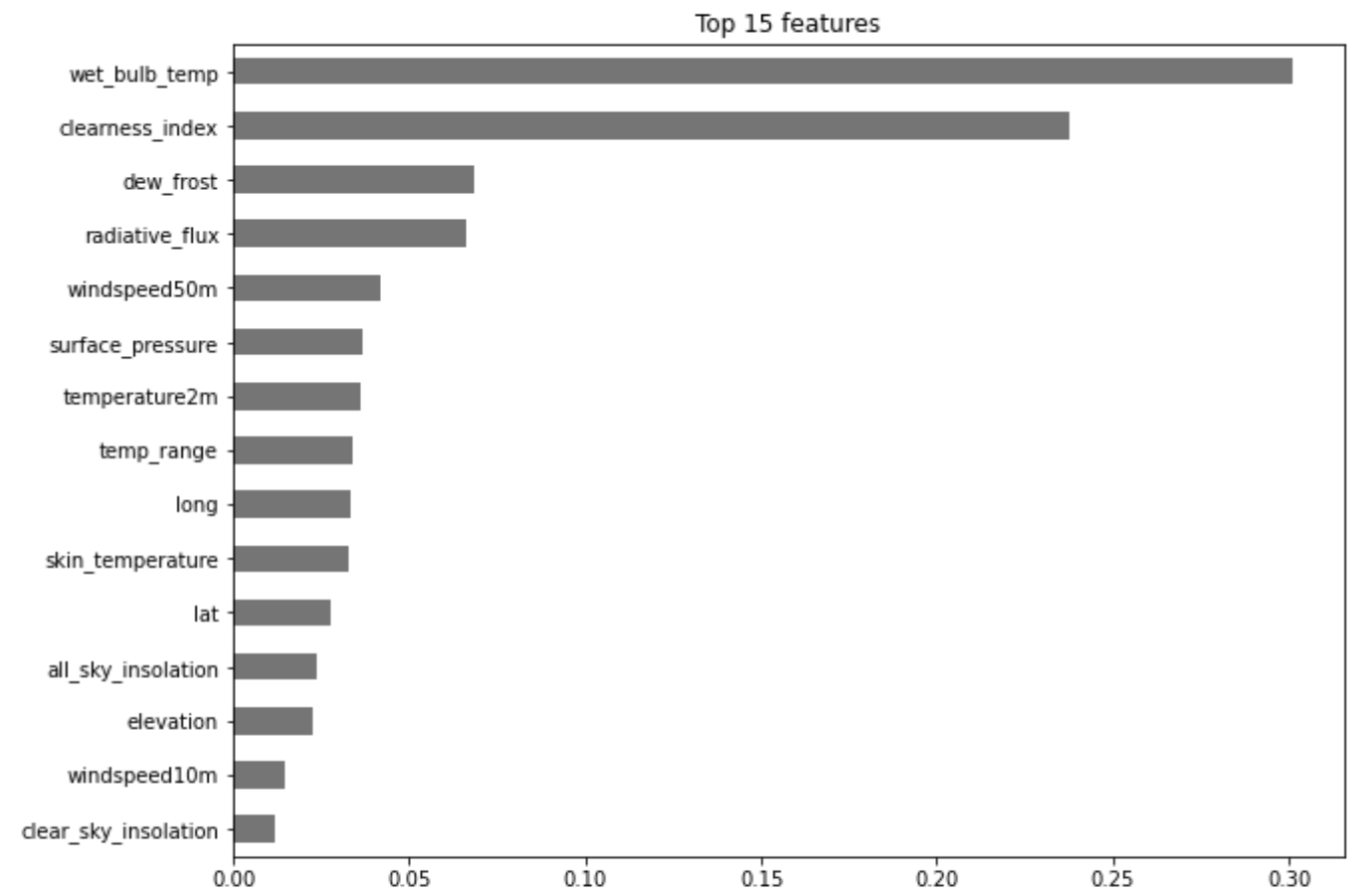

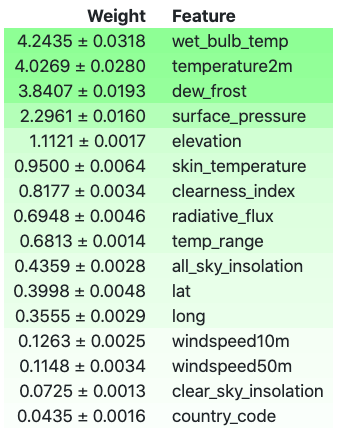

Feature Importances from XGBRegressor

XGBRegressor was used to extract the top 15 features that contribute to the prediction of Precipitation.

Top 15 Features as determined after XGBRegressor Run

Permutation Importance from XGBRegressor

Permutation importance ranks the features by shuffling the values of one feature at a time and measuring how much the model’s performance drops. Wet bulb temperature still ranks at the top in predicting Precipitation; however, the ranking has changed for the other features, as shown in the output below.

Image Showing Permutation Importance by priority

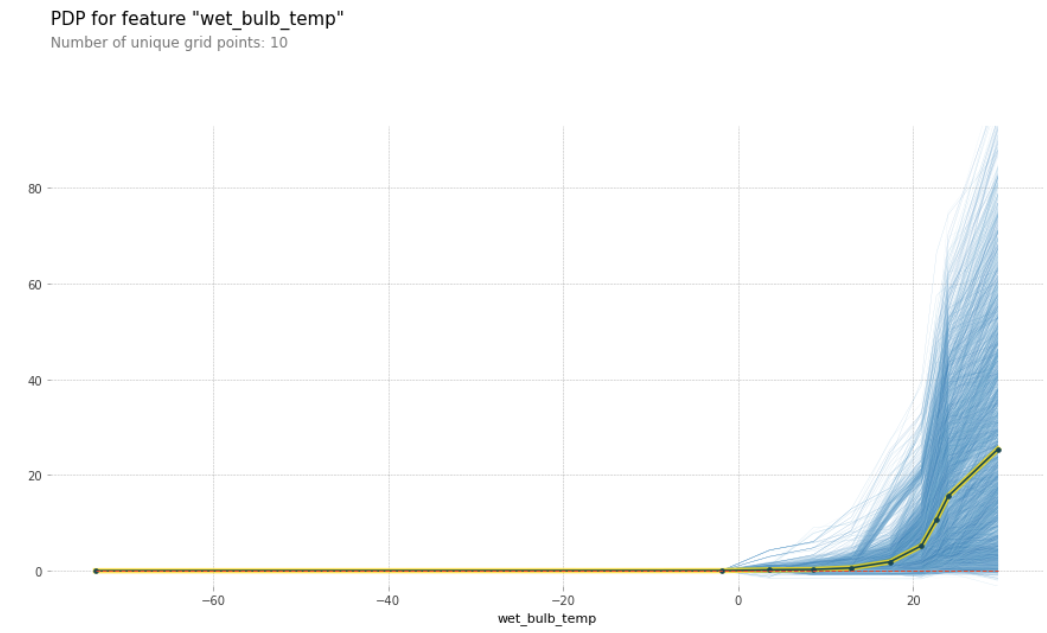

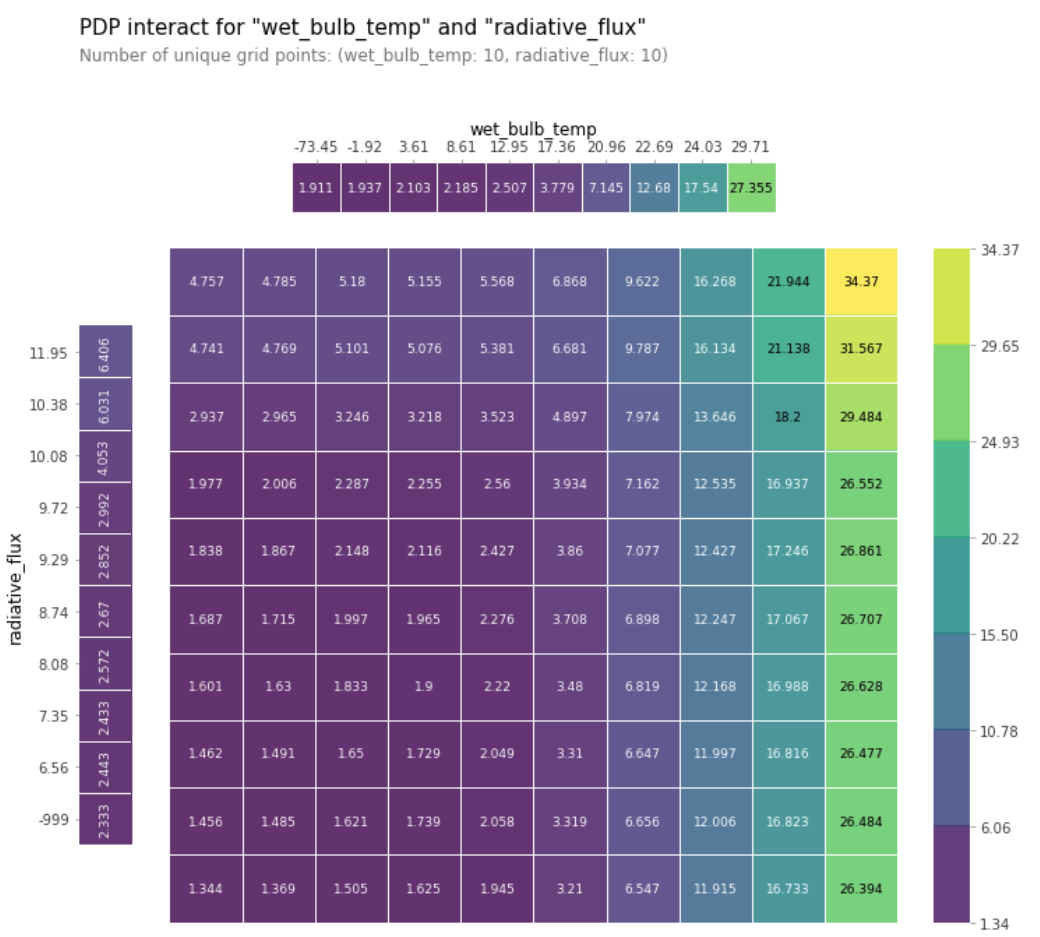
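
The idea behind permutation importance can be sketched from scratch as follows. This is an illustrative implementation under simple assumptions (MAE as the metric, a toy prediction function standing in for the fitted XGBoost pipeline, hypothetical helper names), not the code used to produce the ranking above.

```python
import random
from statistics import mean


def mae(y_true, y_pred):
    """Mean absolute error between two equal-length sequences."""
    return mean(abs(t - p) for t, p in zip(y_true, y_pred))


def permutation_importance(predict, X, y, n_repeats=5, seed=0):
    """Average increase in MAE when one column of X is shuffled."""
    rng = random.Random(seed)
    base_error = mae(y, [predict(row) for row in X])
    importances = []
    for col in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            shuffled = [row[col] for row in X]
            rng.shuffle(shuffled)
            X_perm = [row[:col] + [v] + row[col + 1:]
                      for row, v in zip(X, shuffled)]
            perm_error = mae(y, [predict(row) for row in X_perm])
            increases.append(perm_error - base_error)
        importances.append(mean(increases))
    return importances


# Toy stand-in for a fitted model: uses feature 0 only, ignores feature 1.
def toy_predict(row):
    return 2.0 * row[0]


X = [[float(i), float(i % 3)] for i in range(20)]
y = [toy_predict(row) for row in X]
importances = permutation_importance(toy_predict, X, y)
```

Shuffling the ignored feature leaves the error unchanged, so its importance is zero, while shuffling the feature the model relies on raises the error.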

Partial Dependence Plot (PDP)

A Partial Dependence Plot (PDP) was built to see the effect of one or two features at a time on the predicted Precipitation. From the PDP, it can be inferred that the relationship between the features and the target is monotonic.

Partial Dependence Plot showing the Variation of Precipitation with Wet Bulb Temperature

Partial Dependence Plot showing the Variation of Precipitation with Wet Bulb Temperature and Radiative Flux

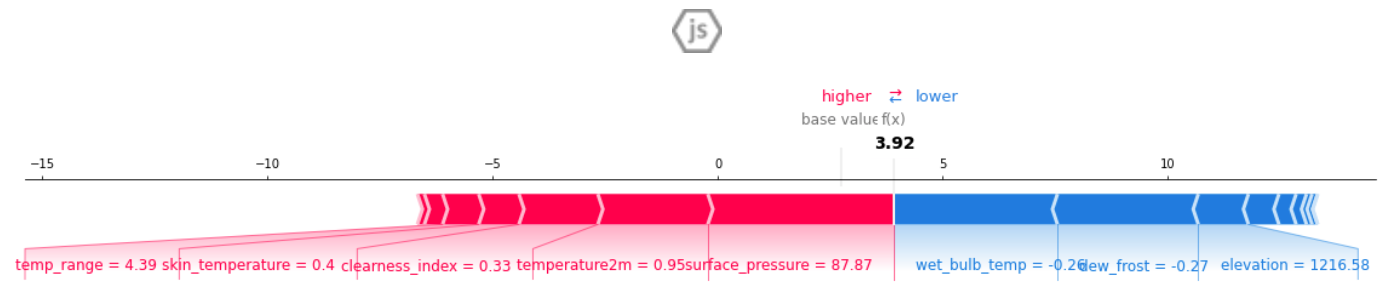
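
A one-feature partial dependence curve can be computed by forcing the feature to each value on a grid and averaging the model's predictions over all rows. The sketch below uses a toy prediction function in place of the fitted pipeline, and `partial_dependence` is a hypothetical helper name.

```python
from statistics import mean


def partial_dependence(predict, X, feature, grid):
    """Mean prediction when `feature` is forced to each grid value."""
    curve = []
    for value in grid:
        forced = [row[:feature] + [value] + row[feature + 1:] for row in X]
        curve.append(mean(predict(row) for row in forced))
    return curve


# Toy stand-in for a fitted model (not the XGBoost pipeline).
def toy_predict(row):
    return 3.0 * row[0] + row[1]


X = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
curve = partial_dependence(toy_predict, X, feature=0, grid=[0.0, 1.0, 2.0])
is_increasing = all(a <= b for a, b in zip(curve, curve[1:]))
print(curve, is_increasing)  # [20.0, 23.0, 26.0] True
```

A curve that only rises or only falls across the grid is what a monotonic relationship looks like in a PDP.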

SHAP Values

SHAP values show how much each feature contributes to the final predicted value.

Image Showing how Precipitation Changes with Change in Features
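
To make the notion of a per-feature contribution concrete, the sketch below computes exact Shapley values for one row against a single reference row by averaging each feature's marginal effect over every ordering of the features. This is a simplification for illustration: the `shap` library estimates such values against a background dataset with far more efficient algorithms, and the toy prediction function and the `shapley_values` helper are hypothetical.

```python
from itertools import permutations
from statistics import mean


def shapley_values(predict, instance, baseline):
    """Exact Shapley values of `instance` relative to a `baseline` row."""
    n = len(instance)
    contributions = [[] for _ in range(n)]
    for order in permutations(range(n)):
        current = list(baseline)
        previous = predict(current)
        for feature in order:
            # Switch this feature from its baseline value to the real value.
            current[feature] = instance[feature]
            updated = predict(current)
            contributions[feature].append(updated - previous)
            previous = updated
    return [mean(c) for c in contributions]


# Toy stand-in for a fitted model, with an interaction term.
def toy_predict(row):
    return 2.0 * row[0] + row[1] * row[2]


instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
values = shapley_values(toy_predict, instance, baseline)
print(values)  # [2.0, 3.0, 3.0]
```

The contributions add up to the difference between the prediction for the row and the prediction for the reference row, which is the property that lets them be read as a breakdown of a single prediction. Enumerating every ordering is only feasible for a handful of features.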

Conclusion

Based on all the features used in this research, temperature was the key feature in predicting the Precipitation of any region. With temperatures rising due to global warming, the research in this project suggests that precipitation levels are also likely to increase. If you are interested in testing the XGBoost model to make predictions, use the app in the link below.